The Core: Human Law Invariants. The structural DNA of human stability, decoded from 5,000 years of legal history and reduced to three universal axioms.

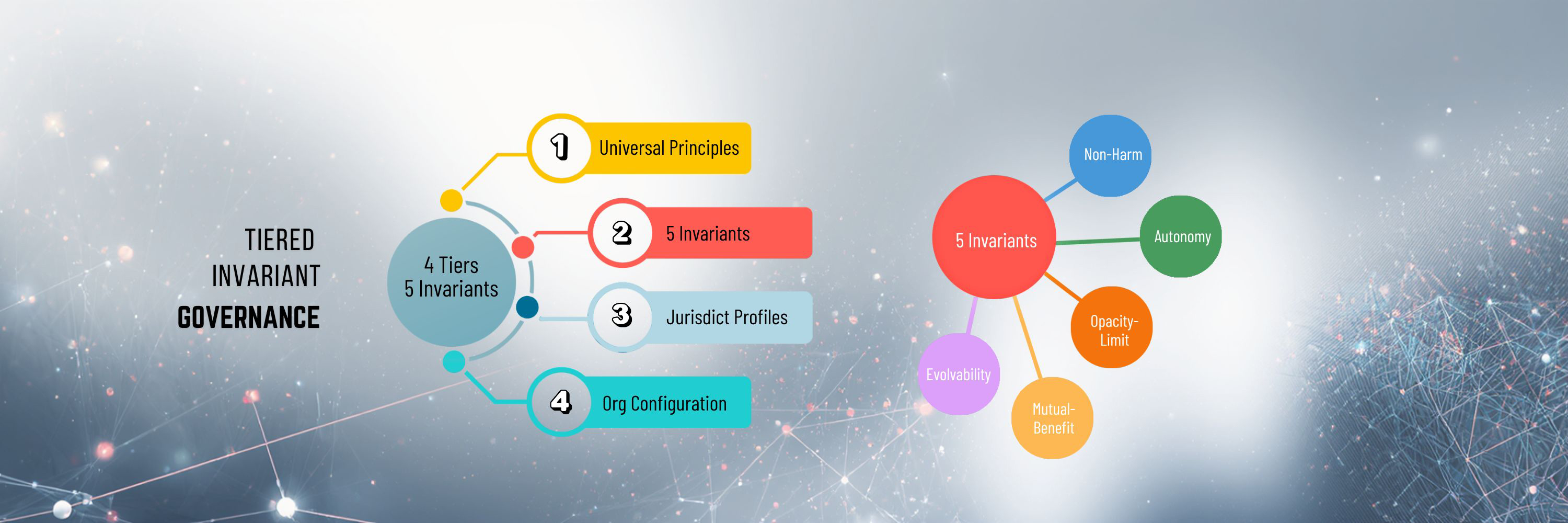

QGI is a tiered, invariant governance architecture that transforms universal principles into deterministic constraints, ensuring AI actions remain safe, transparent, lawful, and stable across contexts.

AI systems now operate in domains where mistakes have direct human impact: healthcare, finance, hiring, education, public services, and critical infrastructure. Existing governance approaches were built for human decision‑makers, not autonomous systems acting across jurisdictions at machine speed. They rely on interpretation rather than enforcement.

QG Invariant Governance (QGI) takes a different path. It is built on the structural logic summarized in The Core of Human Laws: Harmony, Co‑Existence, and Co‑Expansion. These are universal principles shared across all legal and ethical systems. They describe what governance must preserve for any system to remain stable and ethical.

QGI transforms those principles into a deterministic, tiered governance architecture. Instead of asking whether an output “seems compliant,” QGI evaluates whether an action is permitted to execute under fixed, machine‑enforceable constraints. This shift marks a structural breakthrough in AI governance.
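The shift from "seems compliant" to "is permitted to execute" can be illustrated with a minimal sketch, assuming each constraint is a pure predicate over a proposed action. The `Action` fields, the `gate` function, and the two example constraints are hypothetical illustrations, not part of QGI's published design. Because every predicate is deterministic, the same action always receives the same verdict, and the list of violated constraints doubles as an explanation.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical action record; field names are illustrative only.
@dataclass(frozen=True)
class Action:
    kind: str
    risk_level: int
    has_human_approval: bool


# A constraint is a pure predicate: same input, same verdict, every time.
Constraint = Callable[[Action], bool]


def gate(action: Action, constraints: dict[str, Constraint]) -> tuple[bool, list[str]]:
    """Return (permitted, names of violated constraints); the action runs only if permitted."""
    violated = [name for name, check in constraints.items() if not check(action)]
    return (not violated, violated)


# Hypothetical example constraints, evaluated in insertion order.
CONSTRAINTS: dict[str, Constraint] = {
    "known_action_kind": lambda a: a.kind in {"recommend", "summarize", "decide"},
    "approval_for_high_risk": lambda a: a.risk_level < 4 or a.has_human_approval,
}

if __name__ == "__main__":
    permitted, reasons = gate(Action("decide", 5, False), CONSTRAINTS)
    print(permitted, reasons)  # False ['approval_for_high_risk']
```

The decision is made before execution, so a blocked action never runs; nothing depends on reviewing its output afterwards.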

QGI is not a model, a policy, or a set of guidelines. It is a governance architecture that works like an operating system.

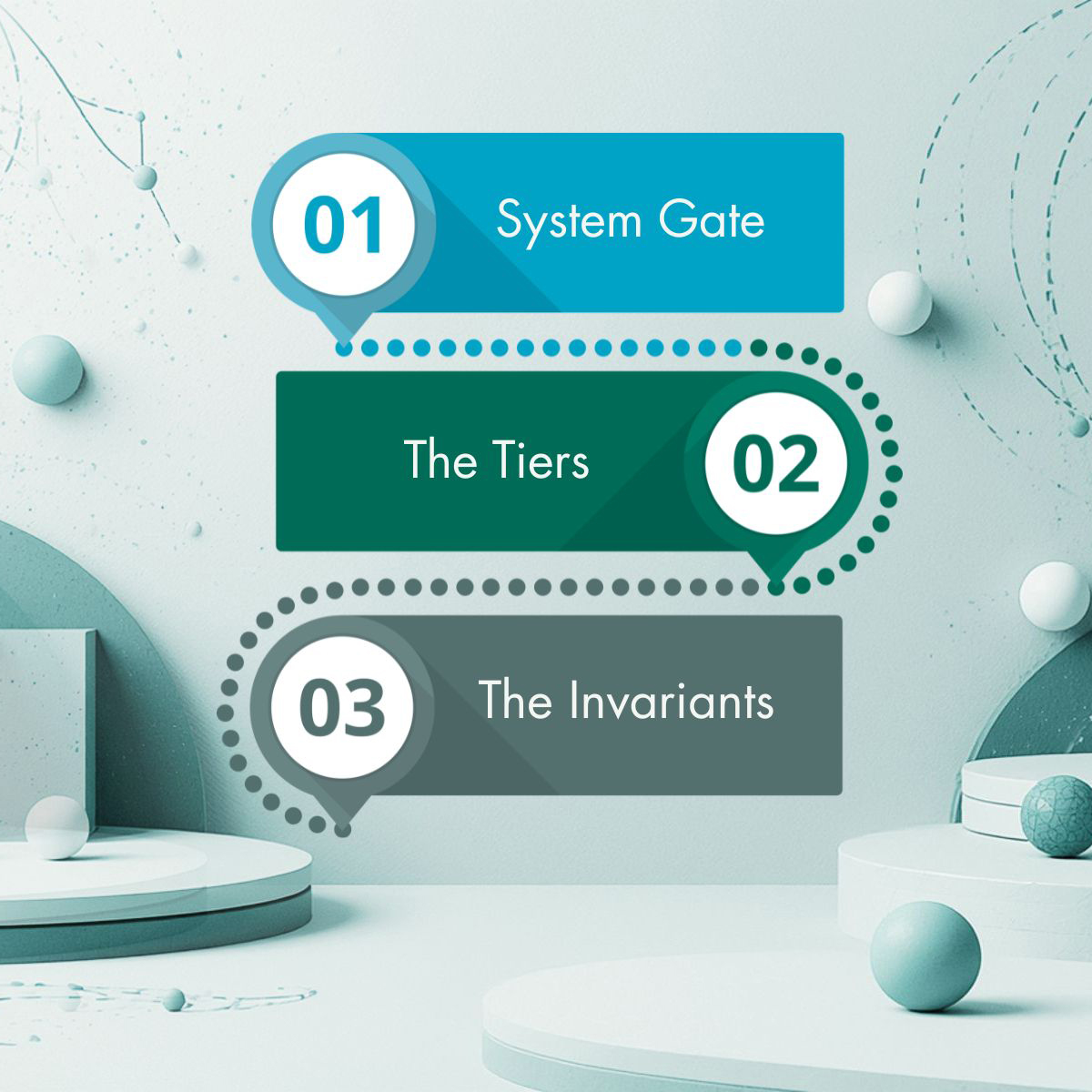

QGI is built as a four‑tier stack. Each tier has a distinct role, and together they create a complete enforcement pathway.

In this four‑tier architecture, each tier solves a different structural problem. Together, the tiers turn the three universal principles (Harmony, Co‑Existence, Co‑Expansion) into enforceable, machine‑actionable boundaries. Their objectives are distinct, non‑overlapping, and sequential.

This structure ensures that governance is not an afterthought: it is embedded directly into system execution.
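The article does not name the four tiers, so the sketch below uses placeholder tiers (`tier1_principles` through `tier4_runtime`, all hypothetical) to show only the structural idea: distinct checks applied in a fixed sequence, where the first tier that objects stops execution before the action runs. The request fields (`purpose`, `level`, `reviewer`, `drift_alert`) are likewise assumptions.

```python
from typing import Callable, Optional

# A tier inspects a request and returns None to pass it on, or a reason to stop it.
Tier = Callable[[dict], Optional[str]]


def run_tiers(request: dict, tiers: list[tuple[str, Tier]]) -> tuple[bool, str]:
    """Apply tiers in fixed order; the first tier that objects halts execution."""
    for name, tier in tiers:
        reason = tier(request)
        if reason is not None:
            return False, f"{name}: {reason}"
    return True, "all tiers passed"


# Placeholder tiers: the roles and request fields here are hypothetical.
TIERS: list[tuple[str, Tier]] = [
    ("tier1_principles", lambda r: None if r.get("purpose") else "no declared purpose"),
    ("tier2_risk_level", lambda r: None if 1 <= r.get("level", 0) <= 5 else "unknown risk level"),
    ("tier3_controls", lambda r: None if r.get("level", 0) < 4 or r.get("reviewer") else "reviewer required"),
    ("tier4_runtime", lambda r: None if not r.get("drift_alert") else "drift alert active"),
]
```

For example, `run_tiers({"purpose": "triage", "level": 5}, TIERS)` returns `(False, "tier3_controls: reviewer required")`: the first two tiers pass, and the third stops a Level 5 request that has no assigned reviewer. Keeping each tier a separate function mirrors the "distinct, non‑overlapping" objectives, and the fixed list order makes the sequence explicit.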

There are five levels of control in total, applied according to real-world requirements. Each level is backed by a detailed parameter framework that defines measurable thresholds and system controls.

| Level | Category | Use Case | Governance Focus | Oversight | Transparency |
|---|---|---|---|---|---|
| Level 1 | Minimal Risk | Casual AI, content generation | Speed and efficiency | No | Low |
| Level 2 | Limited Risk | Productivity tools, recommendations | Balance and usability | No | Moderate |
| Level 3 | Sensitive Data | Personalization, profiling | Privacy and user protection | No | Moderate–High |
| Level 4 | High Risk | Finance, legal, regulated systems | Safety, fairness, explainability | Yes | High |
| Level 5 | Critical Risk | Healthcare, hiring, legal decisions | Strict control and accountability | Yes | Full |
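To be machine-enforceable, the level table has to exist as data rather than prose. A minimal sketch, assuming levels are identified by the integers 1 to 5: the `RiskLevel` and `LevelPolicy` names and the `policy_for` helper are hypothetical, while the values are transcribed from the table. The detailed per-level parameter frameworks are not given in this article, so they are not modeled.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    MINIMAL = 1
    LIMITED = 2
    SENSITIVE_DATA = 3
    HIGH = 4
    CRITICAL = 5


@dataclass(frozen=True)
class LevelPolicy:
    governance_focus: str
    oversight: bool
    transparency: str


# Values transcribed from the level table; names are hypothetical.
POLICIES: dict[RiskLevel, LevelPolicy] = {
    RiskLevel.MINIMAL: LevelPolicy("Speed and efficiency", False, "Low"),
    RiskLevel.LIMITED: LevelPolicy("Balance and usability", False, "Moderate"),
    RiskLevel.SENSITIVE_DATA: LevelPolicy("Privacy and user protection", False, "Moderate–High"),
    RiskLevel.HIGH: LevelPolicy("Safety, fairness, explainability", True, "High"),
    RiskLevel.CRITICAL: LevelPolicy("Strict control and accountability", True, "Full"),
}


def policy_for(level: int) -> LevelPolicy:
    """Look up a level's policy; an unknown level raises ValueError instead of silently defaulting."""
    return POLICIES[RiskLevel(level)]
```

Failing loudly on an unknown level is a deliberate choice in this sketch: a system that cannot classify an action should not fall back to the least restrictive policy.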

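As risk increases, oversight becomes required (from Level 4 upward) and transparency requirements rise from Low to Full.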

At its foundation, QGI reflects three universal principles: Harmony, Co‑Existence, and Co‑Expansion.

These principles are not abstract ideals. Within QGI, they are translated into mathematical decision models that resolve conflicts when competing constraints cannot all be satisfied. This ensures consistent, explainable outcomes, even in complex scenarios.
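The article does not specify which decision model QGI uses. One common deterministic choice, shown here purely as an assumption, is lexicographic ordering: rank candidate actions by how many constraints they violate under each principle, compared in a fixed priority order. The order used below (Harmony, then Co-Existence, then Co-Expansion) simply follows the order in which the article lists the principles and is hypothetical, as are the `PRIORITY` and `resolve` names.

```python
# Hypothetical priority order; the article does not state how QGI ranks the principles.
PRIORITY = ("harmony", "co_existence", "co_expansion")


def resolve(candidates: dict[str, dict[str, int]]) -> str:
    """Pick the candidate whose violations are lexicographically smallest under PRIORITY.

    `candidates` maps an option name to {principle: number of violated constraints};
    a missing principle counts as zero violations. Ties break on the option name,
    so the same inputs always yield the same choice.
    """

    def key(name: str) -> tuple:
        counts = candidates[name]
        return tuple(counts.get(p, 0) for p in PRIORITY) + (name,)

    return min(candidates, key=key)
```

With `{"approve": {"harmony": 1}, "defer": {"co_existence": 1}, "deny": {"co_existence": 1, "co_expansion": 2}}`, the result is `"defer"`: it ties with `"deny"` on the first two principles and wins on the third, while `"approve"` loses immediately on the highest-priority one. The violation counts themselves are the explanation for the outcome.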

QGI also sets controls across six areas of protection drawn from human law.

All jurisdictional regulations can be mapped to these areas of protection.

Two drift controls also ensure that the model stays on track.
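The article does not describe its two drift controls. As one plausible form, assumed for illustration only, a drift control can compare a rolling rate (for example, the share of actions the gate blocks) with a fixed baseline and raise a flag when the gap exceeds a tolerance. The `RateDriftMonitor` class, the metric, the window size, and the tolerance are all hypothetical.

```python
from collections import deque


class RateDriftMonitor:
    """Flags drift when a rolling rate moves more than `tolerance` away from a fixed baseline.

    Hypothetical sketch: the monitored metric, window size, and tolerance
    are illustrative values, not QGI parameters.
    """

    def __init__(self, baseline: float, tolerance: float, window: int = 100):
        self.baseline = baseline
        self.tolerance = tolerance
        # Oldest observations fall out automatically once the window is full.
        self.events: deque = deque(maxlen=window)

    def record(self, flagged: bool) -> bool:
        """Add one observation; return True only if the window is full and out of tolerance."""
        self.events.append(flagged)
        if len(self.events) < self.events.maxlen:
            return False  # not enough history yet to judge drift
        rate = sum(self.events) / len(self.events)
        return abs(rate - self.baseline) > self.tolerance
```

Waiting for a full window before judging avoids false alarms from the first few observations; the trade-off is that drift is detected only after `window` events.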

Unlike traditional AI governance approaches that rely on guidelines or post-hoc reviews, QGI operates as a constraint-based system.

This makes QGI particularly suitable for high-impact domains, including hiring, healthcare, finance, and public administration.

QGI is designed to integrate with existing public-sector systems and workflows.

For jurisdictions such as Ontario and the broader Canadian public sector, QGI provides a practical path to implement responsible AI at scale without sacrificing efficiency or innovation.

At its core, QGI transforms AI governance from a policy challenge into an engineering discipline. It enables governments to move beyond “trusting AI” to verifying and controlling AI behavior in measurable terms.

This shift is essential for building public trust, ensuring fairness, and supporting sustainable digital transformation across government services.

QGI is not just a framework. It is an operational system for governing intelligence.


The Flow: Runtime Enforcement. Experience the mechanics of "Compliance-as-Code", where deterministic gates prevent violations at machine speed.

The Benefits: Dollars Saved. The architecture lowers governance costs by reducing complexity, code duplication, monitoring overhead, and the effort of regulatory updates across large AI systems.