Current AI governance relies on probabilistic safety and post‑hoc oversight, leading to failures, lawsuits, and regulatory gaps. A deterministic governance model is now essential.

AI governance today operates through a patchwork of policies, probabilistic safety techniques, and post‑hoc oversight mechanisms that were never designed for systems capable of autonomous action, rapid scaling, or cross‑domain influence. Most governance frameworks rely on interpretive guidance—ethical principles, best‑practice recommendations, or compliance checklists—rather than enforceable constraints. As a result, governance often functions as an advisory layer rather than a structural one. It can influence behavior, but it cannot guarantee it.
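The difference between an advisory layer and a structural one can be illustrated with a minimal Python sketch. Everything in it is hypothetical and chosen for illustration: the `ALLOWED_ACTIONS` allowlist, the action names, and the `advisory_check` and `structural_check` functions are assumptions, not part of QGI or any existing framework.

```python
import warnings

# Hypothetical allowlist of permitted actions (illustrative names only).
ALLOWED_ACTIONS = frozenset({"read_record", "summarize"})


class PolicyViolation(Exception):
    """Raised when an action falls outside the allowlist."""


def advisory_check(action: str) -> str:
    """Advisory layer: flags a violation but still lets the action through."""
    if action not in ALLOWED_ACTIONS:
        warnings.warn(f"action {action!r} is outside policy")
    return action


def structural_check(action: str) -> str:
    """Structural layer: a disallowed action is never returned to the caller."""
    if action not in ALLOWED_ACTIONS:
        raise PolicyViolation(action)
    return action
```

In this sketch, `advisory_check("delete_record")` emits a warning and returns the action anyway, while `structural_check("delete_record")` raises `PolicyViolation`, so the disallowed action cannot proceed unless the caller deliberately handles the exception.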

Most contemporary AI governance relies on three mechanisms:

These mechanisms share a common limitation: they depend on interpretation—by humans, by models, or by downstream systems. They do not provide deterministic guarantees. They cannot ensure that an AI system will always remain within safe boundaries, especially under distribution shift, adversarial pressure, or autonomous tool use.

The limitations of probabilistic governance are no longer theoretical. They are visible in real‑world failures across multiple sectors:

These failures have led to lawsuits, regulatory investigations, and public backlash. In many cases, organizations argued that they had “followed responsible AI principles,” yet the systems still caused harm. The gap between principle and practice has become impossible to ignore.

The underlying problem is structural. Current governance frameworks rely on:

This creates systems that can appear compliant during testing but behave unpredictably in deployment. As models become more capable, more autonomous, and more deeply integrated into critical infrastructure, this gap becomes untenable.

Governments are responding with new laws and enforcement activity, including the EU AI Act, GDPR enforcement actions, FTC investigations, and sector‑specific regulations. Yet these measures assume that AI systems can be governed the way financial systems or medical devices are governed: through enforceable constraints, traceability, and auditability. Current AI architectures cannot meet these expectations because they lack a deterministic governance layer.

This mismatch between regulatory requirements and technical reality is now one of the central tensions in the field.
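To make "enforceable constraints, traceability, and auditability" concrete, here is a small Python sketch of a gate that evaluates each proposed action against fixed rules and records every decision. It is an assumption-laden illustration, not a description of QGI or of any regulatory requirement: the `Gate` and `Decision` classes, the `no_deletes` rule, and the action names are all invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Decision:
    """One immutable audit record: what was asked, the verdict, and why."""
    action: str
    allowed: bool
    rule: str


@dataclass
class Gate:
    """Hypothetical deterministic gate: same rules + same action -> same verdict."""
    rules: dict[str, Callable[[str], bool]]
    audit_log: list[Decision] = field(default_factory=list)

    def evaluate(self, action: str) -> Decision:
        # Rules are checked in insertion order; the first failing rule decides.
        for name, permits in self.rules.items():
            if not permits(action):
                decision = Decision(action, False, name)
                break
        else:
            decision = Decision(action, True, "all_rules_passed")
        # Every decision is logged, whether the action was allowed or denied.
        self.audit_log.append(decision)
        return decision


# Illustrative usage with a single hypothetical rule.
gate = Gate(rules={"no_deletes": lambda a: not a.startswith("delete_")})
gate.evaluate("read_record")
gate.evaluate("delete_record")
```

After these two calls, `gate.audit_log` holds one allowed and one denied decision, and the denied entry names the rule that blocked it. The design point is that the verdict depends only on the rules and the action, so it can be replayed and audited after the fact.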

To govern AI systems that operate at machine speed, across multiple domains, and under evolving regulatory conditions, governance must shift from interpretive to structural. It must move from:

A new model must provide:

Without this shift, AI governance will remain reactive, inconsistent, and legally vulnerable.

If current governance relies on interpretation, and if interpretation cannot guarantee safety, then the central research question becomes:

How do we design a governance system that an AI must obey, rather than one it merely attempts to follow?

This question leads directly into the structural research that underpins QGI: the deep logic of human law, the mathematics of constraint systems, and the reduction of universal principles into deterministic invariants.

The Research: Axiomatic Reduction

A formal methodology for stripping away legal complexity to find the irreducible governing constants of any system.

The Core: Human Law Invariants

The structural DNA of human stability, decoded from 5,000 years of legal history and reduced to three universal axioms.

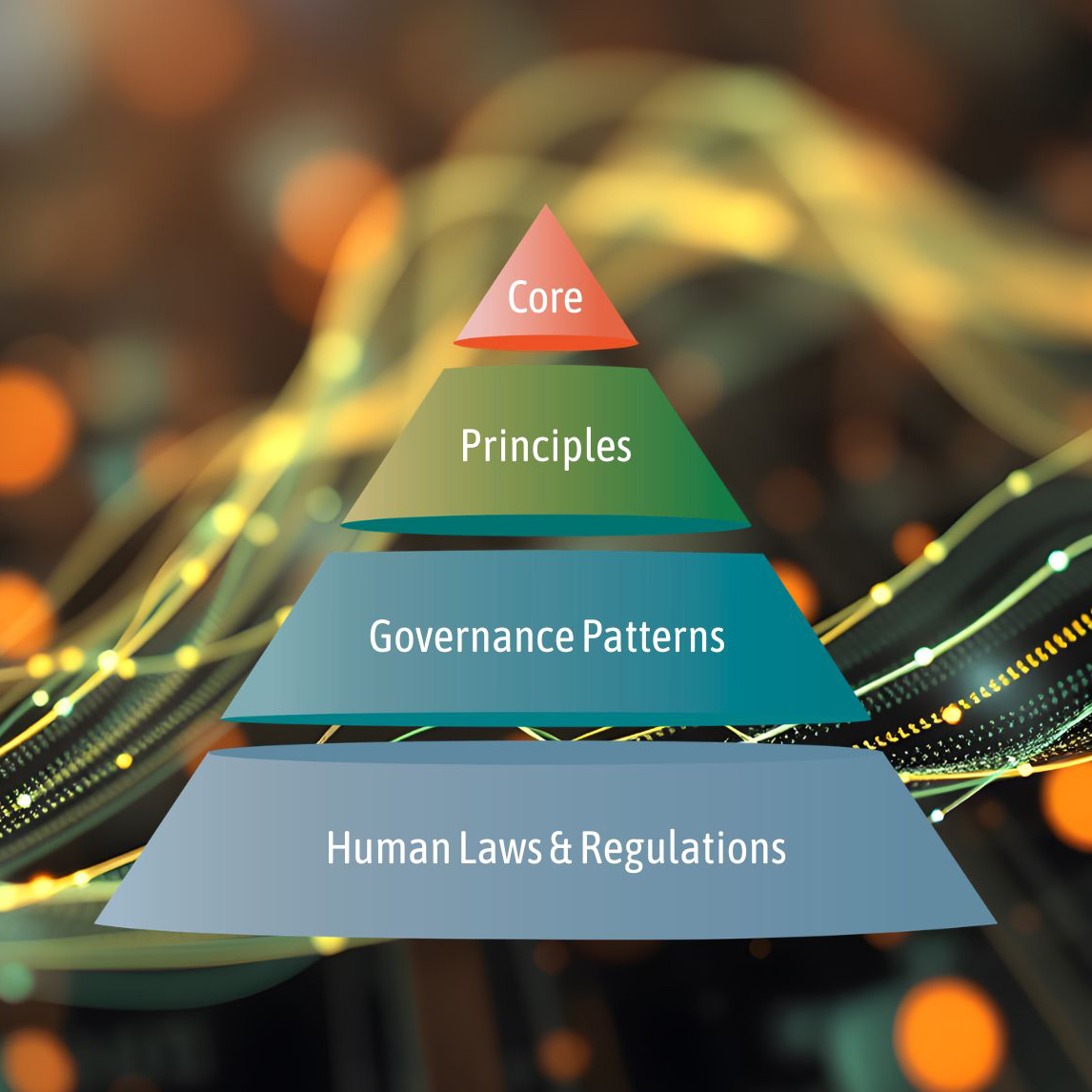

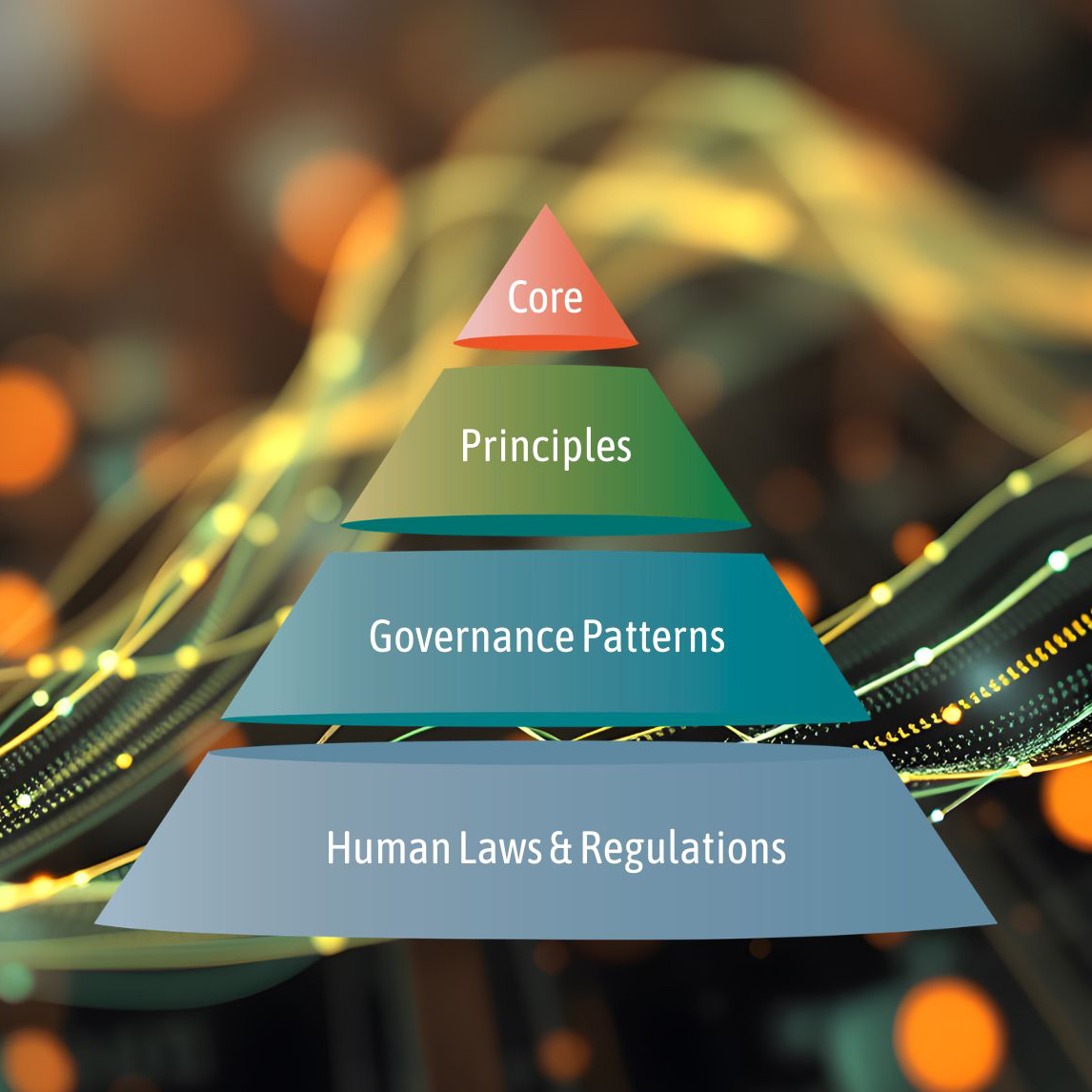

The Architecture: Introducing QGI

The four-tiered architecture that moves governance from policy documents into the computational kernel of the machine.